Boycott AI and You Hand It To Planet Wreckers

We Need To Steer AI in a More Ethical Direction

AI is killing the planet. And using it means you’re supporting billionaire tech bros who don’t care who or what gets harmed.

That’s the story being shared by environmental and ethical groups right now. And while there’s some truth in it, the call to boycott AI is not just unhelpful — it may be one of the most consequential mistakes that people with good values could make.

Because the world desperately needs earth-friendly, compassionate people to embrace AI, not boycott it.

You can only shape something from the inside. And right now, AI is already spiralling — fast.

This Is Already Happening, Whether You Participate or Not

Artificial Intelligence is transforming every aspect of human civilisation. Not might. Not could. Already is.

And the pace is accelerating in ways that most people haven’t fully absorbed. Within the next few years, AI will fundamentally reshape healthcare, education, agriculture, energy systems, transportation, politics and law - not to mention the impact it will have on people’s jobs and careers on a scale we haven’t seen since the Industrial Revolution.

The question isn’t whether AI will transform our world. That’s settled.

The question is: Whose values will it amplify?

AI Is Being Weaponised

Right now, while thoughtful people debate the ethics of engaging with AI, politically motivated elites are spending hundreds of millions of dollars weaponising it.

They are using AI to generate thousands of pieces of targeted political content every single day. They’re creating deepfake videos and fabricated news articles that spread lies about everything from climate change and renewable energy, to immigration and human rights.

Around half a million deepfakes were shared on social media in 2023 - a figure that had grown to approximately 8 million by 2025, according to cybersecurity firm DeepStrike, which puts annual growth at nearly 900%.

Research tracking a Russia-linked propaganda site found that when it adopted AI tools, it was able to generate significantly larger quantities of disinformation, with measurable shifts in both volume and breadth of content.

If current trends continue, the majority of political content online will soon be AI-generated. And right now, overwhelmingly, it’s being generated by people who do not share progressive values.

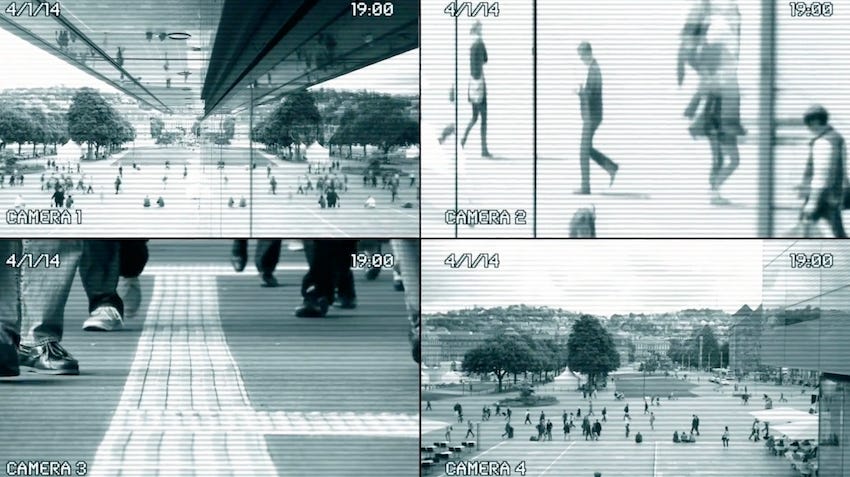

In the Wrong Hands, AI Becomes a Machine for Control

In the hands of authoritarians, AI becomes mass surveillance - systems that track and suppress dissent. Facial recognition that targets protesters and marginalised communities. Propaganda machines engineered to trigger fear and hatred.

This isn’t speculative. A recent report by the Australian Strategic Policy Institute found that China is using AI to automate censorship, enhance surveillance, and pre-emptively suppress dissent - with the technology predicting public demonstrations before they happen and monitoring prisoners’ facial expressions for signs of anger.

Research from MIT found that in China, the government has increasingly deployed AI-driven facial recognition to suppress dissent, has been successful at limiting protest, and in doing so has spurred the development of still better AI-based tools - a feedback loop that MIT researchers have called an “AI-tocracy.”

And this technology is being exported. In Afghanistan, some 90,000 Chinese-manufactured cameras equipped with facial recognition have been installed in Kabul, helping the Taliban enforce gender apartheid policies and suppress any attempt at public protest.

Iran followed suit in 2022 during the women’s rights protests, announcing plans to deploy facial recognition linked to its extensive biometric database to police every aspect of women’s public behaviour.

Environmental harms get amplified too: more optimised illegal logging, more sophisticated wildlife trafficking, more efficient overfishing, more ruthless fossil fuel extraction.

AI doesn’t have values. It amplifies whatever values its users bring to it.

In the Right Hands, It’s A Force For Good

AI has the potential to level the playing field in ways that have never before been possible. It can coordinate grassroots movements across thousands of communities simultaneously. It can translate campaign materials into dozens of languages instantly - making local movements truly global.

It can fact-check misinformation in real time. It can identify patterns of voter suppression and corruption that humans would simply miss in the noise.

Put AI in the hands of people who actually care about outcomes beyond profit, and you get smarter power grids that waste less energy and integrate more renewables.

Better forecasting for floods, wildfires, and heatwaves. Sharper deforestation monitoring and faster detection of illegal activity. Better climate modelling. Better battery research. Better ways to track pollution, optimise logistics, reduce waste, and protect habitats.

Research from the Grantham Institute at the London School of Economics estimates that if AI is effectively applied in key areas, it could reduce global emissions by 3.2 to 5.4 billion tonnes of CO₂-equivalent per year by 2035 - outweighing the emissions from data centres by a significant margin.

The difference between these two futures comes down to this: who builds it, who controls it, and who uses it.

Let’s Address the Environmental Concern Head-On

Because it’s real, and it matters, and it deserves an honest answer.

Training large AI models does use serious energy. Data centres currently account for around 0.5% of global combustion emissions, though the IEA projects this could rise to 1–1.4% by 2030 - making it one of the few sectors where emissions are set to grow. That’s a legitimate concern, and anyone who dismisses it isn’t being straight with you.

But context matters enormously here.

Livestock production alone accounts for around 14.5% of global human-made emissions - comparable to all direct emissions from global transport, according to the UN Food and Agriculture Organisation.

The fashion industry causes around 10% of global carbon emissions, and is on track to grow its footprint by around 60% by 2030.

And bitcoin alone burns through more electricity each year than entire countries such as Norway or Argentina.

The Department of Defense in the US - to take one striking example - is the world’s largest institutional user of petroleum and the single largest institutional producer of greenhouse gases.

War generates horrendous emissions, on top of all the other evils of conflict.

But nobody is boycotting their wardrobes or their banking apps on environmental grounds with the same intensity being directed at AI.

Critically, you can refuse to use AI on environmental grounds, but the result won’t be less AI usage. It will just mean that 100% of political AI is in the hands of people who don’t care about the environment.

Meanwhile, progressive pressure is pushing for change. Google has matched 100% of its electricity consumption with renewable energy every year since 2017, and Microsoft announced in early 2026 that it had matched 100% of its 2025 global electricity with renewables - with both companies targeting 24/7 carbon-free energy by 2030.

Those milestones happened because environmentally conscious people engaged with the technology and demanded better practices. Boycotts don’t create that pressure. Engagement does.

The Clock Is Ticking

We are racing against time to influence the direction of artificial intelligence. And one side is already miles ahead.

While thoughtful people have been debating ethics, others have been building weapons. While some have been concerned about doing things the right way, others have been doing whatever it takes to win.

While some have been worried about compromising their values, others have been using AI to attack those very values at scale.

They’re not going to stop. They’re going to get faster, better, more sophisticated.

AI systems are enabling states and non-state actors to propagate disinformation and malicious narratives at a scale that was previously impossible. This is not a slow-moving threat. It is already reshaping political discourse in every country with a functioning internet.

If people who care about the planet, human rights and animal rights continue to sit this out, the future is already being decided. And it’s being decided without them.

This Is About Defending Your Values, Not Abandoning Them

The world needs more compassionate voices shaping AI. More voices that demand accountability. More voices that won’t accept the Silicon Valley doctrine of “move fast and break things” when the things being broken are vital ecosystems and people’s lives.

But you can’t shape it from outside. You can’t effectively influence what you don’t fully understand.

This isn’t about abandoning your values. It’s about defending them - in the arena where the fight is actually happening.

If you care about all forms of life on Earth, your voice is needed. Now. Your participation is needed.

Please, get more involved in the world of artificial intelligence. And help to make it a force for good.

The Truth About AI’s Environmental Impact

The International Energy Agency (IEA) estimates that data centres currently account for about 0.5% of global combustion emissions. This is projected to rise to 1 to 1.4% by 2030.

That’s small in percentage terms, but it does represent one of the few sectors where emissions are set to increase, while most others decarbonise. Data centres are among the fastest-growing sources of emissions globally.

There are also water concerns that often go unreported. For every kilowatt-hour of energy a data centre consumes, roughly two litres of water are needed for cooling, according to MIT research. As more data centres are built - particularly in water-stressed regions - this becomes a serious ecological issue.
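To make the water figure concrete, here is a minimal sketch of the arithmetic, assuming the rough two-litres-per-kilowatt-hour ratio quoted above holds. The function name and the example consumption figure are hypothetical, chosen only for illustration; real water use varies widely with cooling design and climate.

```python
# Rough ratio quoted in the text: about 2 litres of cooling water per kWh.
LITRES_PER_KWH = 2.0


def cooling_water_litres(energy_kwh: float,
                         litres_per_kwh: float = LITRES_PER_KWH) -> float:
    """Estimate cooling water (litres) for a given energy use (kWh)."""
    if energy_kwh < 0:
        raise ValueError("energy_kwh must be non-negative")
    return energy_kwh * litres_per_kwh


# Hypothetical example: a facility that consumes 1 GWh (1,000,000 kWh).
example_kwh = 1_000_000
print(f"{cooling_water_litres(example_kwh):,.0f} litres")
```

On that assumption, every gigawatt-hour of consumption implies roughly two million litres of cooling water.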

Google’s greenhouse gas emissions surged nearly 50% between 2019 and 2023, largely due to AI. Microsoft’s have risen nearly 30% since 2020.

The same IEA that documents AI’s growing emissions footprint also concludes that widespread adoption of AI in key sectors could lead to emissions reductions of 1,400 million tonnes (1.4 billion tonnes) of CO₂ per year by 2035.

The Grantham Institute at LSE calculates potential reductions of 3.2 to 5.4 billion tonnes of CO₂-equivalent per year - several times larger than the footprint of data centres.
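The two estimates are quoted in different units, so a quick conversion helps when comparing them. The sketch below uses only the figures given in the text; the variable and function names are illustrative. Note that the IEA figure is stated in CO₂ and the Grantham figure in CO₂-equivalent, so the ratio is indicative rather than exact.

```python
# Figures as quoted in the text (annual reductions by 2035).
IEA_REDUCTION_MT = 1_400   # million tonnes of CO2 (IEA)
GRANTHAM_LOW_GT = 3.2      # billion tonnes of CO2-equivalent (Grantham, low)
GRANTHAM_HIGH_GT = 5.4     # billion tonnes of CO2-equivalent (Grantham, high)


def million_to_billion_tonnes(million_tonnes: float) -> float:
    """Convert million tonnes to billion tonnes."""
    return million_tonnes / 1_000


iea_gt = million_to_billion_tonnes(IEA_REDUCTION_MT)

# How many times larger the Grantham range is than the IEA estimate.
ratio_low = GRANTHAM_LOW_GT / iea_gt
ratio_high = GRANTHAM_HIGH_GT / iea_gt

print(f"IEA estimate: {iea_gt:.1f} billion tonnes per year")
print(f"Grantham range is {ratio_low:.1f}x to {ratio_high:.1f}x the IEA estimate")
```

Put side by side, the Grantham range works out at roughly two to four times the IEA estimate.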

AI is already being applied to improve grid management for renewables integration, optimise energy use in buildings, reduce food waste, and model climate systems with unprecedented precision.

A Google AI project that helps airline pilots avoid contrail-generating flight paths could alone, if adopted industry-wide, offset more emissions than the entire AI sector currently produces.

AI’s environmental impact is real, growing, and inadequately regulated. The answer to that is better regulation, stronger corporate accountability, and more voices demanding clean energy commitments.

The IEA’s projection that AI’s benefits could dwarf its footprint is of course conditional. It depends on who controls how AI is deployed, and whether the people making those decisions have environmental values.

That’s why this article and video have been making the case to engage more, not less.

Why We Need The Input of Environmentalists

The large language models and generative AI systems that are reshaping public life were built by a small number of extraordinarily well-funded companies, whose founding cultures were shaped by the view that technology is neutral, disruption is a good thing, and that whoever ships fastest wins.

The consequence of this culture is that AI systems were built with almost no meaningful input from environmental scientists, human rights lawyers, indigenous communities, public health experts, animal welfare researchers, or the billions of people in the Global South who will be most affected by both climate change and AI’s economic disruptions.

This is not a problem that boycotts can fix. It is a problem that only sustained, informed, purposeful engagement can address.

What Weaponised AI Actually Looks Like

Researchers studying a Russia-linked propaganda outlet found that after it adopted generative AI tools, it could produce significantly larger quantities of disinformation. The peer-reviewed study was published in PNAS Nexus (2025).

Generative AI doesn’t just speed up the production of individual pieces of content. It enables the kind of coordinated, multi-platform, high-volume information flooding that makes it nearly impossible for fact-checking organisations to keep up.

By the time a deepfake has been debunked, it has already been seen by millions and shared by thousands.

The “Liar’s Dividend”

There’s a subtler, and in some ways more dangerous, consequence of AI-generated disinformation. Researchers at the Brennan Center for Justice describe what they call the “liar’s dividend”.

As deepfakes and manipulated content become more sophisticated, bad actors can exploit the confusion, not just by creating false content, but by dismissing real evidence as fake.

So, for example, a politician caught on camera saying something damaging can now plausibly claim the video was AI-generated. A genuine document exposing corruption can be dismissed as a fabrication. Truth itself becomes contested.

That’s the world we now inhabit. It’s a new threat to democracy, and it accelerates as AI improves.

The Surveillance Machine

China’s surveillance technology companies have sold facial recognition and mass surveillance infrastructure to dozens of countries. The Carnegie Endowment for International Peace has tracked AI surveillance technology deployment across at least 75 countries, with authoritarian governments disproportionately represented among the buyers - and Chinese companies, particularly Huawei, supplying the technology to at least 50 of them.

In Afghanistan, approximately 90,000 Chinese-manufactured cameras with facial recognition capability have been installed across Kabul, giving the Taliban a surveillance apparatus that would have been technically inconceivable a decade ago.

Iran deployed AI facial recognition specifically to identify women participating in the 2022 protests against the country’s gender apartheid policies.

The commercial AI ecosystem - driven by profit, not values - is actively enabling authoritarianism at scale, and nobody is going to stop that from outside the system.

What Progressive AI Engagement Actually Looks Like

This is the part that’s most underdeveloped in public conversation - and this is the area we need to improve and accelerate. Here are some examples of how AI can amplify progressive values…

Disinformation Response

One of the most urgent applications is using AI to fight the AI-generated disinformation described above. Fact-checking organisations are already exploring AI tools that can identify deepfakes, flag suspicious content patterns, and trace the origins of viral fabrications.

The challenge is that well-resourced disinformation operations are currently outpacing underfunded fact-checkers. More progressive investment in this space - both financial and intellectual - is desperately needed.

Environmental Monitoring

AI is already being used for deforestation monitoring (Global Forest Watch uses machine learning to track tree cover loss in near real time), wildlife trafficking detection, illegal fishing identification through satellite data analysis, and tracking corporate emissions.

These tools already exist and they’re being used. But they could be scaled significantly with more engagement from the environmental community.

Grassroots Organising

AI translation tools are already transforming what’s possible for global grassroots movements. Campaign materials, legal documents, and educational content can now be translated into dozens of languages at very little cost - a development that dramatically lowers the barrier to truly international coordination.

The practical challenge is that many grassroots organisations lack the capacity or confidence to incorporate these tools. This is where AI-literate people within progressive movements can make an immediate difference.

Climate Modelling and Policy

Some of the most significant climate science of the next decade is expected to be shaped by AI - in the modelling of tipping points, the prediction of extreme weather events, the identification of optimal carbon capture sites, and the optimisation of renewable energy systems.

Uncomfortable Questions

We should be clear about the concerns surrounding progressive engagement with AI companies. The history of corporate ethics engagement is mixed at best.

Environmental consultants have worked inside fossil fuel companies for decades without fundamentally changing those companies’ core behaviour.

Human rights advisors have consulted for social media platforms that continued to host hate speech and disinformation.

There’s a legitimate question about whether individual engagement with AI systems meaningfully influences how those systems are built and governed.

Individual usage probably has minimal influence. But organised, vocal, collective engagement has more.

The scale of potential job losses as AI’s capabilities advance is also alarming.

It’s a reminder that progressive AI engagement cannot be only about using the tools - it also has to include support for policies that protect workers and distribute AI’s economic benefits more broadly.

The Time Is Now

The battle over AI’s values is not settled. The governance frameworks are still being written. The regulatory decisions are still being made. The public understanding of what these systems are and what they should be accountable for is still being formed.

Researchers, communicators and anyone else who engages with these questions right now can influence the outcomes.

But this window will not stay open indefinitely. The time to shape AI is now.

Which means the cost of sitting it out, for people who care about what happens to this planet and all life on it, is potentially enormous.
